AI-Generated Code Is Accumulating Technical Debt: What to Do About It

AI coding assistants are shipping features faster than ever. But the hidden technical debt is piling up in ways that won't surface until it's too late.

Jason Overmier

Innovative Prospects Team


Your team is shipping faster than ever. Features that used to take days now take hours. AI coding assistants have become indispensable tools for keeping up with feature requests.

But here’s the uncomfortable question no one’s asking: What happens when the bill comes due?

We’re seeing a pattern across the industry. Teams that adopted AI coding tools without verification processes are accumulating technical debt at an alarming rate. The code works. Tests pass. But beneath the surface, problems are compounding.

The debt won’t show up in your sprint velocity metrics. It will show up six months from now, when a simple feature request turns into a week-long refactoring effort.

The Hidden Crisis

The problem is invisible until it’s not. AI-generated code has a unique property: it appears correct at first glance but often contains subtle issues that compound over time.

What we’re seeing:

| Issue | Why AI Does It | When It Surfaces |
|---|---|---|
| Suboptimal algorithms | Optimizes for working code, not performance | Under load, at scale |
| Over-engineered patterns | Training data favors complex, general-purpose patterns | Every future change |
| Missing edge cases | Lacks context about your domain | Production edge cases |
| Inconsistent patterns | Each prompt can produce a different style | Team onboarding, refactors |
| Security blind spots | Training data includes insecure code and may predate recent vulnerabilities | Security audits, breaches |

Real example: A startup used AI to generate their payment processing logic. It worked perfectly in testing. Six months later, they discovered race conditions in refund handling that had cost them $40K in lost transactions. The AI had generated code that handled the happy path but missed edge cases in concurrent operations.

A senior developer would have caught this during code review. But the team trusted the AI output without scrutiny.
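The startup’s code is not public, so the sketch below is a hypothetical reconstruction of this class of bug, not their implementation. An in-memory ledger (`charged`, `refunded`) stands in for the payments database. In `refundUnsafe`, the balance check and the write are separated by an `await`, so two concurrent refunds can both pass the check. The fix shown serializes refunds per order with a small promise-chain lock; it only works within a single process, and the helper names are our own.

```typescript
// Hypothetical in-memory ledger standing in for the payments database.
const charged = new Map<string, number>([["order-1", 100]]);
const refunded = new Map<string, number>();

// Simulates the await on a payment provider or database between check and write.
const simulateIo = () => new Promise<void>((resolve) => setTimeout(resolve, 5));

// Race-prone: the balance check and the write are separated by an await, so two
// concurrent refunds can both see the full remaining balance and both succeed.
async function refundUnsafe(orderId: string, amount: number): Promise<boolean> {
  const remaining = (charged.get(orderId) ?? 0) - (refunded.get(orderId) ?? 0);
  if (amount > remaining) return false;
  await simulateIo();
  refunded.set(orderId, (refunded.get(orderId) ?? 0) + amount);
  return true;
}

// Per-order lock built from a promise chain: each refund for the same order
// starts only after the previous one has finished. Settled entries are never
// removed here, which a real implementation would need to handle.
const locks = new Map<string, Promise<unknown>>();

function withOrderLock<T>(orderId: string, task: () => Promise<T>): Promise<T> {
  const previous = locks.get(orderId) ?? Promise.resolve();
  const next = previous.then(task);
  // Store a version that never rejects so one failed refund does not block the queue.
  locks.set(orderId, next.catch(() => undefined));
  return next;
}

// Safe within a single process: check and write now run one refund at a time.
function refundSafe(orderId: string, amount: number): Promise<boolean> {
  return withOrderLock(orderId, () => refundUnsafe(orderId, amount));
}
```

Against the 100-unit charge, two concurrent 80-unit calls to `refundUnsafe` both return `true` and record 160 refunded. The same two calls through `refundSafe` return `true` and `false`. Once refunds run on more than one server, an in-process lock is no longer enough, and you would reach for a database transaction with row locking or an idempotency key instead.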

Quick Assessment: Do You Have AI Debt?

Use this checklist to assess your risk level:

Red Flags (Present = High Risk)

- Code reviews rarely question AI-generated code

- Team accepts AI suggestions without reading them fully

- No architecture review for AI-generated modules

- Performance testing happens after deployment

- Different developers use different AI prompts

- No documented AI usage guidelines

- Security reviews skip AI-generated code

- Tests were also AI-generated without verification

- 0-2 checks: You’re managing AI debt well

- 3-5 checks: Debt is accumulating; act soon

- 6-8 checks: Crisis mode; remediation needed
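If you run this checklist as a recurring team survey or track it on a dashboard, the scoring is simple enough to encode directly. A minimal sketch; the function name and risk labels are our own, and the input is one boolean per red flag (true when the flag is present).

```typescript
// The three risk bands from the checklist, keyed by how many red flags are present.
type AiDebtRisk = "managing well" | "accumulating" | "crisis";

function assessAiDebt(redFlags: boolean[]): AiDebtRisk {
  const present = redFlags.filter(Boolean).length;
  if (present <= 2) return "managing well"; // 0-2 checks
  if (present <= 5) return "accumulating"; // 3-5 checks
  return "crisis"; // 6-8 checks
}
```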

Why AI Code Accumulates Debt

AI coding assistants are trained on open-source code. They’re excellent at reproducing common patterns. But they lack context about your specific domain, architecture decisions, and long-term maintainability goals.

The fundamental mismatch:

| What AI Optimizes | What Production Requires |
|---|---|
| Working code | Maintainable code |
| Common patterns | Architectural consistency |
| Quick implementation | Scalable solutions |
| Passing tests | Edge case handling |
| Feature completion | Operational excellence |

The compounding effect:

Each AI-generated module that doesn’t follow your architectural patterns adds friction. Every over-engineered solution that looked clever becomes a liability. Each missing edge case surfaces as a production incident.

The debt accumulates silently because individually, each issue seems minor. It’s the aggregate that becomes overwhelming.

The Four Types of AI Debt

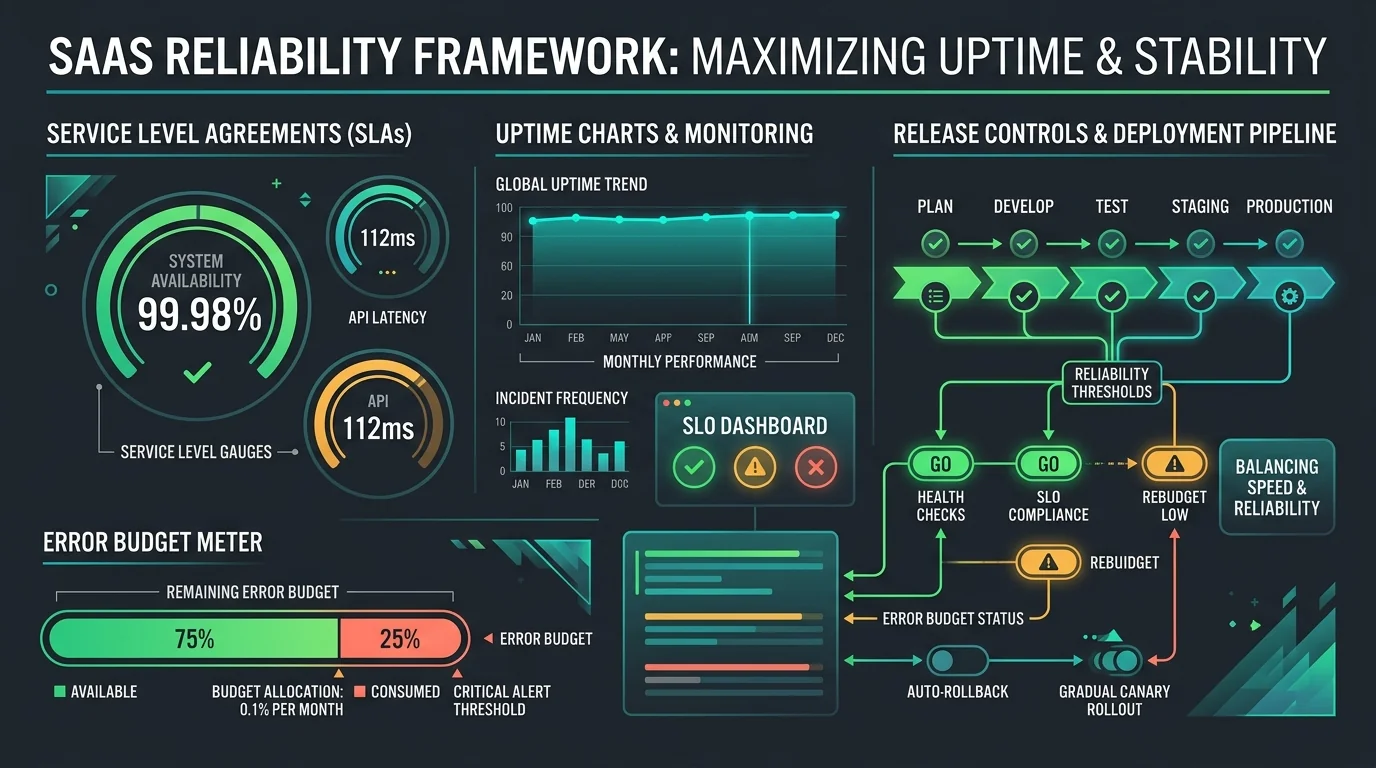

1. Performance Debt

AI often generates inefficient solutions. They work, but they don’t scale.

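```typescript
// Placeholder types for a hypothetical data-access layer (`db`).
interface User { id: string; orderIds: string[] }
interface Order { id: string; userId: string }
declare const db: {
  users: { findOne(id: string): Promise<User | null> };
  orders: {
    findOne(id: string): Promise<Order | null>;
    find(filter: { userId: string }): Promise<Order[]>;
  };
};

// AI-generated: N+1 query problem (one query for the user, then one per order)
async function getUserOrdersSlow(userId: string) {
  const user = await db.users.findOne(userId);
  if (!user) return [];
  const orders: (Order | null)[] = [];
  for (const orderId of user.orderIds) {
    orders.push(await db.orders.findOne(orderId));
  }
  return orders;
}

// Production-ready: a single query (assumes each order stores its userId)
async function getUserOrders(userId: string) {
  return db.orders.find({ userId });
}
```

The first version makes a separate database round trip for every order on every call. It works fine with 100 users. At 10,000 users, your database crawls.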
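The single-query fix assumes orders can be filtered by `userId`. When all you have is a list of IDs, the usual alternative is to batch them into one lookup (in SQL, `WHERE id IN (...)`). The self-contained sketch below uses a hypothetical in-memory `FakeDb` that counts the queries it serves, so the difference is visible without a real database.

```typescript
interface Order { id: string; userId: string }

// Hypothetical stand-in for a database client that counts queries served.
class FakeDb {
  queries = 0;
  private readonly orders: Order[];

  constructor(orders: Order[]) {
    this.orders = orders;
  }

  // One query per call: the shape an N+1 loop relies on.
  async findOrderById(id: string): Promise<Order | undefined> {
    this.queries++;
    return this.orders.find((o) => o.id === id);
  }

  // One query for many IDs, like SQL `WHERE id IN (...)`.
  async findOrdersByIds(ids: string[]): Promise<Order[]> {
    this.queries++;
    const wanted = new Set(ids);
    return this.orders.filter((o) => wanted.has(o.id));
  }
}

// N+1 pattern: a round trip per order ID.
async function loadOneByOne(db: FakeDb, ids: string[]): Promise<Order[]> {
  const result: Order[] = [];
  for (const id of ids) {
    const order = await db.findOrderById(id);
    if (order) result.push(order);
  }
  return result;
}

// Batched: a single round trip for all order IDs.
function loadBatched(db: FakeDb, ids: string[]): Promise<Order[]> {
  return db.findOrdersByIds(ids);
}
```

For 50 orders, `loadOneByOne` issues 50 queries and `loadBatched` issues 1. Both return the same orders; only the number of round trips differs.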

2. Architectural Debt

AI doesn’t know your architectural decisions. It generates code that matches its training data, not your system design.

What this looks like:

- Mixing state management patterns (Redux + Context + local state)

- Inconsistent error handling strategies

- Some modules use TypeScript strictly, others use `any`

- Import paths that bypass your established abstractions

Each inconsistency makes the codebase harder to reason about.
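Inconsistent error handling is often the easiest of these to spot: one module throws, another returns `null`, a third returns an error string. One remedy is to pick a single convention, write it into your guidelines, and hold AI-generated code to it in review. The sketch below shows one such convention, a discriminated-union `Result` type; the names are our own, not a library’s, and `parseQuantity` is a hypothetical example function.

```typescript
// One possible convention: fallible domain functions return a Result instead of
// mixing thrown exceptions, null returns, and error strings.
type Result<T, E = Error> =
  | { ok: true; value: T }
  | { ok: false; error: E };

const ok = <T>(value: T): Result<T, never> => ({ ok: true, value });
const err = <E>(error: E): Result<never, E> => ({ ok: false, error });

// Hypothetical example: parsing a quantity from user input.
function parseQuantity(input: string): Result<number, string> {
  const n = Number(input);
  if (!Number.isInteger(n) || n <= 0) return err(`invalid quantity: ${input}`);
  return ok(n);
}
```

Callers then branch on `result.ok`, and the compiler narrows the type so `value` and `error` are only reachable on the matching branch.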

3. Security Debt

AI models train on public code, much of which lacks security best practices. They generate code that’s functionally correct but vulnerable.

Common issues we find:

- SQL injection vulnerabilities in query builders

- Missing authentication checks on API endpoints

- Sensitive data logged in error messages

- Cryptographic operations using deprecated algorithms

- Dependency versions with known CVEs
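SQL injection is the easiest of these to show in a few lines. The sketch below contrasts string interpolation with a parameterized query. The `{ text, values }` shape mirrors the query config that node-postgres (`pg`) accepts; other drivers use different placeholder syntax, and the function names here are hypothetical.

```typescript
// Query object in the { text, values } shape that node-postgres accepts.
interface ParameterizedQuery {
  text: string;
  values: unknown[];
}

// Vulnerable: user input is spliced directly into the SQL string.
function findUserUnsafe(email: string): string {
  return `SELECT id, email FROM users WHERE email = '${email}'`;
}

// Safer: the SQL text is fixed and the input travels separately as a parameter,
// so the database treats it as a value rather than as SQL.
function findUserSafe(email: string): ParameterizedQuery {
  return { text: "SELECT id, email FROM users WHERE email = $1", values: [email] };
}
```

With the input `x' OR '1'='1`, the unsafe version produces a `WHERE` clause that matches every row; the safe version leaves the SQL text unchanged and passes the whole string as the `$1` value.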

4. Testing Debt

When you use AI to generate both code and tests, you get a false sense of confidence. The tests pass because they were generated from the code, so they confirm what it does rather than what it should do.

The problem:

- Tests cover the happy path, not edge cases

- No integration tests, only unit tests

- Mocks that return fake data rather than simulating real failures

- No performance or load testing

When bugs surface in production, your tests gave you no warning.
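Tests written from the requirements, rather than from the code, deliberately exercise the failure paths. As a sketch, assume a hypothetical requirement that stock lookups are retried up to three times in total before the error is surfaced. The mock below fails on purpose instead of returning canned data; `InventoryService`, `stockLevelWithRetry`, and `flakyInventory` are illustrative names.

```typescript
// Hypothetical dependency of the code under test.
interface InventoryService {
  stockLevel(sku: string): Promise<number>;
}

// Code under test. Requirement: try a lookup up to `maxAttempts` times in total,
// then surface the last error. (No backoff, to keep the sketch short.)
async function stockLevelWithRetry(
  service: InventoryService,
  sku: string,
  maxAttempts = 3,
): Promise<number> {
  let lastError: unknown = new Error("maxAttempts must be at least 1");
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return await service.stockLevel(sku);
    } catch (error) {
      lastError = error;
    }
  }
  throw lastError;
}

// A mock that simulates real failures: it rejects a set number of times before
// answering, and counts calls so tests can check the retry behavior.
function flakyInventory(
  failuresBeforeSuccess: number,
  stock: number,
): InventoryService & { calls: number } {
  const mock: InventoryService & { calls: number } = {
    calls: 0,
    async stockLevel(_sku: string): Promise<number> {
      mock.calls++;
      if (mock.calls <= failuresBeforeSuccess) throw new Error("inventory service timeout");
      return stock;
    },
  };
  return mock;
}
```

A requirement-based test then checks both directions: `flakyInventory(2, 7)` should yield 7 after exactly three calls, and `flakyInventory(5, 7)` should make the lookup reject after three calls rather than retrying forever.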

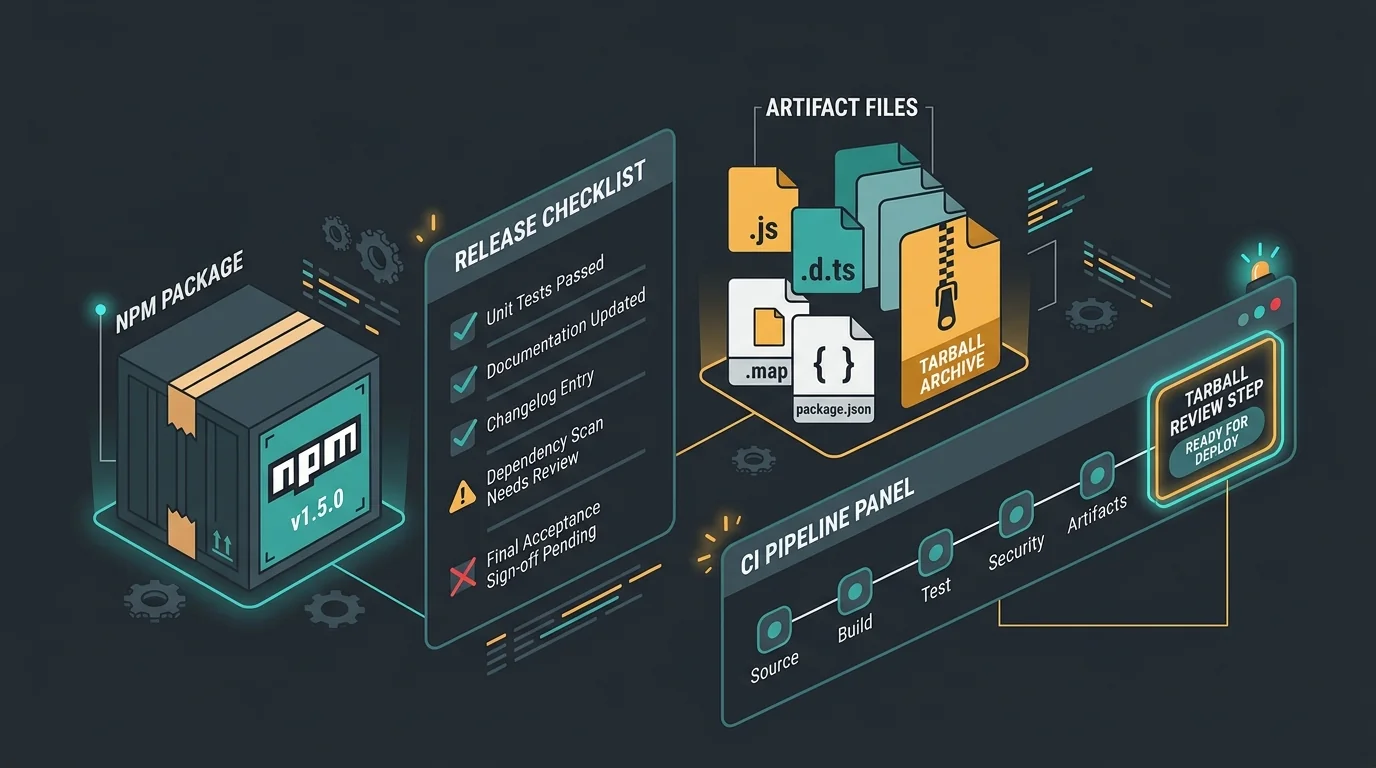

The Verification Gap

Here’s the core issue: AI coding tools have created a verification gap in the development workflow.

Traditional workflow:

```text
Developer writes code
↓
Code review catches issues
↓
Tests validate behavior
↓
Deployment
```

AI-assisted workflow (dangerous version):

```text
Developer accepts AI suggestion
↓
Code review assumes AI is correct
↓
AI-generated tests pass
↓
Deployment with hidden issues
```

The code review step has broken down because reviewers assume AI-generated code is correct. The testing step has broken down because tests are also AI-generated.

What’s missing: A dedicated verification layer that treats AI-generated code as what it is: untrusted input that requires validation.

Common Pitfalls

| Pitfall | Why It Happens | Fix |
|---|---|---|
| Accepting suggestions blindly | AI appears authoritative, developers trust it | Treat AI code like untrusted third-party code |
| Skipping architecture review | Generated code works immediately | Require architecture review for all AI-generated modules |
| No AI usage standards | Each developer uses different prompts | Document prompt patterns and architectural guidelines |
| Using AI for tests | Seems efficient, tests pass | Write tests manually from the requirements, not from the code |
| Ignoring performance | Works fine in development | Performance test all AI-generated code before merge |
| Missing security reviews | Security not visible in functionality | Security review is mandatory for AI-generated code touching data/auth |

Building a Verification Layer

The solution isn’t to stop using AI coding tools. They’re too valuable to abandon. The solution is to add a verification layer that treats AI-generated code with appropriate scrutiny.

Essential components:

1. AI Usage Guidelines

Document how your team should use AI tools:

- Which types of tasks are appropriate for AI assistance

- Required prompt patterns to maintain consistency

- Architectural constraints AI must follow

- Prohibited use cases (security, critical paths)
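Guidelines are easier to follow when part of them is enforced by tooling. For example, the prohibited-use item can be partly encoded as a list of sensitive paths that a CI job checks on every pull request, flagging changes that need a human security review. A minimal sketch; the prefixes and function name are hypothetical placeholders, and how you obtain the list of changed files depends on your CI system.

```typescript
// Hypothetical guideline encoded as data: areas where AI-assisted changes
// always need a human security review. The prefixes are placeholders.
const SECURITY_REVIEW_PREFIXES = ["src/auth/", "src/payments/", "db/migrations/"];

// Returns the changed files that fall under a security-review prefix.
function filesNeedingSecurityReview(changedFiles: string[]): string[] {
  return changedFiles
    .map((file) => file.replace(/\\/g, "/")) // normalize Windows-style separators
    .filter((file) => SECURITY_REVIEW_PREFIXES.some((prefix) => file.startsWith(prefix)));
}
```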

2. Verification Checklist

Every AI-generated code change should pass verification:

```markdown
## AI-Generated Code Verification

- [ ] Code fully read and understood by a human
- [ ] Architecture reviewed for consistency
- [ ] Performance implications assessed
- [ ] Security review completed (if applicable)
- [ ] Edge cases identified and tested
- [ ] Documentation updated
- [ ] Tests written manually, not AI-generated
```

3. Enhanced Code Review

Code reviews for AI-generated code should be more thorough, not less:

- Require the reviewer to explain what the code does

- Question architectural decisions

- Request optimization for performance-critical paths

- Validate edge case handling
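Part of this can be enforced mechanically. If the verification checklist lives in your pull request template, a CI step can refuse to pass while any box is still unchecked. A minimal sketch: the function names are hypothetical, and fetching the pull request description (for example through your Git host’s API) is left out. It catches skipped boxes, not careless ticks, so it supplements human review rather than replacing it.

```typescript
// Hypothetical CI gate: list the checklist items in a pull request description
// that are still unchecked ("- [ ]").
function uncheckedItems(prBody: string): string[] {
  return prBody
    .split(/\r?\n/)
    .map((line) => line.trim())
    .filter((line) => line.startsWith("- [ ]"))
    .map((line) => line.slice("- [ ]".length).trim());
}

// Throws so the CI job fails while any verification box is unchecked.
function assertChecklistComplete(prBody: string): void {
  const missing = uncheckedItems(prBody);
  if (missing.length > 0) {
    throw new Error(`Unchecked verification items:\n${missing.join("\n")}`);
  }
}
```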

4. Periodic AI Debt Audits

Schedule quarterly audits focused on AI-generated code:

- Review all AI-assisted changes since last audit

- Identify accumulating patterns of debt

- Refactor inconsistent patterns

- Update AI usage guidelines based on learnings
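Reviewing all AI-assisted changes since the last audit is only practical if those changes are labeled. Git supports trailer lines such as `Signed-off-by:` at the end of commit messages. The sketch below assumes a team convention of adding a hypothetical `AI-Assisted: yes` trailer, and filters commit records (which you could parse from `git log` output) down to the ones an audit should cover. The `Commit` shape and function name are our own.

```typescript
// Hypothetical commit record, e.g. parsed from `git log` output.
interface Commit {
  hash: string;
  date: Date;
  message: string;
}

// Assumed team convention: a trailer line "AI-Assisted: yes" marks AI-assisted commits.
const AI_TRAILER = /^AI-Assisted:\s*yes\s*$/im;

// Commits made after the last audit that carry the AI-assisted trailer.
function aiAssistedSince(commits: Commit[], lastAudit: Date): Commit[] {
  return commits.filter(
    (c) => c.date.getTime() > lastAudit.getTime() && AI_TRAILER.test(c.message),
  );
}
```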

The AI-Augmentation Audit

This is where we come in. We’ve developed an audit service specifically for teams using AI coding tools.

What we review:

| Component | What We Check |
|---|---|
| Architecture | Consistency patterns, integration quality |
| Performance | Query patterns, algorithmic efficiency, scaling issues |
| Security | OWASP top 10, auth patterns, data handling |
| Testing | Test coverage quality, edge case handling |
| Debt inventory | Categorize and prioritize accumulated debt |

What you get:

- Prioritized remediation plan

- AI usage guidelines customized to your architecture

- Verification process documentation

- Team training on responsible AI usage

ROI: One audit can prevent hundreds of hours of refactoring and production incidents.

When to Schedule an Audit

Schedule immediately if:

- You’ve been using AI tools for 6+ months without verification

- Feature velocity is slowing despite AI assistance

- You’re seeing increasing production incidents

- New developers struggle to understand the codebase

- You’re planning a significant scaling effort

Schedule within 3 months if:

- You recently adopted AI coding tools

- You have some verification but it’s inconsistent

- Your team is growing

Schedule annually if:

- You have mature verification processes

- Your architecture is stable

- Your team is experienced with AI tools

The Path Forward

AI coding tools are here to stay. Used responsibly, they’re powerful accelerants. The risk comes from treating them as replacements for engineering judgment rather than amplifiers of it.

The healthy approach:

- Use AI for speed, human review for quality

- Generate code, but verify architecture

- Ship features, but audit for debt

- Leverage AI, but maintain standards

The teams that thrive will be the ones who build verification processes that let them move fast without accumulating hidden debt.

How We Can Help

We’ve been helping teams build and audit software for 15 years. We understand both the promise of AI tools and the risks of unchecked technical debt.

Our AI-Augmentation Audit service evaluates your AI-generated code for architectural consistency, performance issues, security vulnerabilities, and testing gaps. You’ll get a prioritized remediation plan and guidelines for responsible AI usage.

Don’t wait for the debt to become a crisis. Early detection is far cheaper than emergency rebuilds.

Book an AI audit to assess your technical debt.

Already seeing the effects of AI debt? We can help you prioritize and fix the issues before they become production emergencies.