46% of Developers Don't Trust AI Code: The Verification Crisis

New research shows a massive trust gap in AI-generated code. Here's how to build verification into your workflow without sacrificing velocity.

Jason Overmier

Innovative Prospects Team

Your team uses AI coding tools. Features ship faster. Sprint velocity looks great. But beneath the metrics, something else is happening.

Developers don’t trust the code.

According to Stack Overflow’s 2025 Developer Survey, 46% of developers actively distrust the accuracy of AI tools. Even more revealing: 45% report that debugging AI-generated code is more time-consuming than debugging human-written code.

The trust gap is real, and it’s creating a hidden drag on engineering productivity that doesn’t show up in velocity charts.

The Trust Gap: What the Data Shows

The research reveals a growing divide between AI adoption and actual confidence in generated code.

Key findings:

| Metric | Percentage | What It Means |
|---|---|---|
| Don’t trust AI code accuracy | 46% | Nearly half of developers lack confidence in AI output |
| Find AI debugging more time-consuming | 45% | AI is creating hidden productivity costs |
| Frustrated by “almost right” AI solutions | 66% | AI frequently misses the mark in subtle ways |

The gap between adoption and trust creates a paradox. Teams use AI tools because they’re expected to, but developers compensate by spending excessive time reviewing code they don’t fully trust.

The real cost: When developers spend more time reviewing AI code than writing it, you’ve lost the productivity gains. You’re paying twice: once for the AI tool, again for the verification overhead.

Why Developers Don’t Trust AI Code

The trust gap isn’t skepticism for its own sake. Developers have legitimate reasons to be wary.

What makes AI code feel untrustworthy:

| Issue | Why It Erodes Trust | Example |
|---|---|---|
| Subtle bugs | Code works in tests, fails in production | Race conditions that only appear under load |
| Wrong patterns | Doesn’t match your architecture | Using Redux when your team standardized on Zustand |
| Missing context | AI doesn’t know your domain | Generating a generic solution when you have specific requirements |
| Security blind spots | Training data predates latest vulnerabilities | Code using deprecated crypto algorithms |
| Illusion of competence | Looks right, but hides flaws | A sorting routine that returns correct results but runs in O(n²) instead of O(n log n) |

A developer can read code written by a colleague and understand the intent. They can ask questions. They know the colleague’s knowledge level. With AI, the code appears correct but comes with zero context about what the model understood or misunderstood.

This is why developers spend more time reviewing AI code. They’re not just checking logic. They’re reverse-engineering what the AI might have misunderstood.

The Hidden Cost of the Trust Gap

When nearly half your team doesn’t trust the code they’re shipping, you pay hidden costs.

Velocity vs. Confidence:

Traditional velocity metrics measure output: story points shipped, pull requests merged, features delivered. They don’t measure confidence: how much developers believe the code will work in production.

What happens when trust is low:

- Excessive code review time - Reviews of AI code can take 2-3x longer than reviews of human-written code

- Defensive programming - Developers add unnecessary safeguards because they don’t trust the implementation

- Slower onboarding - New developers can’t learn from code they don’t understand

- Knowledge silos - Only the developer who accepted the AI suggestion understands why it works

- Deployment anxiety - Deployments become stress events because no one fully understands the changes

The real productivity killer isn’t the bugs in AI code. It’s the cognitive load of constantly working with code you don’t trust.

Building Verification into Your Workflow

The solution isn’t to abandon AI tools. It’s to build verification processes that restore trust without sacrificing velocity.

Verification framework:

1. Establish Trust Boundaries

Not all code requires equal scrutiny. Define trust boundaries based on risk:

| Risk Level | Types of Code | Verification Required |
|---|---|---|
| Low | UI components, utilities, tests | Standard review |
| Medium | Business logic, API endpoints | Enhanced review, manual testing |
| High | Auth, payments, data migrations | Senior review, security audit, load testing |
| Critical | Crypto, regulatory compliance | External audit, formal verification |

Map these boundaries to your codebase. Treat AI-generated code in high and critical categories as untrusted input requiring validation.
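One way to map the boundaries onto a codebase is a small lookup keyed on file paths. The sketch below is only an illustration: the path prefixes (`src/auth/`, `src/payments/`, and so on) and the function name `classifyPullRequest` are assumptions, not part of any tool, and should be swapped for your own repository layout.

```typescript
type RiskLevel = "low" | "medium" | "high" | "critical";

interface TrustBoundary {
  level: RiskLevel;
  // Hypothetical path prefixes; replace with your repository's layout.
  pathPrefixes: string[];
  // Verification steps copied from the risk table.
  verification: string[];
}

// Ordered from most to least risky so the strictest match wins.
const boundaries: TrustBoundary[] = [
  {
    level: "critical",
    pathPrefixes: ["src/crypto/", "src/compliance/"],
    verification: ["external audit", "formal verification"],
  },
  {
    level: "high",
    pathPrefixes: ["src/auth/", "src/payments/", "migrations/"],
    verification: ["senior review", "security audit", "load testing"],
  },
  {
    level: "medium",
    pathPrefixes: ["src/api/", "src/domain/"],
    verification: ["enhanced review", "manual testing"],
  },
];

// Anything that matches no prefix falls back to the low tier.
const lowRisk: TrustBoundary = {
  level: "low",
  pathPrefixes: [],
  verification: ["standard review"],
};

// A pull request inherits the strictest tier among its changed files.
function classifyPullRequest(changedFiles: string[]): TrustBoundary {
  const match = boundaries.find((boundary) =>
    changedFiles.some((file) =>
      boundary.pathPrefixes.some((prefix) => file.startsWith(prefix))
    )
  );
  return match ?? lowRisk;
}
```

A CI step could call `classifyPullRequest` with the list of changed files and use the returned `verification` array to decide which reviewers or checks to require.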

2. The AI Code Review Checklist

Standardize what reviewers look for in AI-generated code:

```markdown
## AI-Generated Code Review

- [ ] I have read every line and understand what it does
- [ ] The code follows our architectural patterns
- [ ] Performance implications have been considered
- [ ] Edge cases are identified and handled
- [ ] Security review completed if applicable
- [ ] Dependencies are up-to-date and licensed
- [ ] Error handling matches our error strategy
- [ ] Tests were written manually, not generated
```

The key change: require reviewers to actively affirm they understand the code. This prevents rubber-stamp approvals.
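If the checklist lives in the pull request description, a merge gate can refuse to pass until every box is ticked. The following is a minimal sketch of that idea, assuming the description uses standard `- [ ]` / `- [x]` task-list syntax; the function name `uncheckedItems` is hypothetical.

```typescript
// Returns the text of every unchecked "- [ ]" item in a PR description.
function uncheckedItems(prBody: string): string[] {
  const unchecked: string[] = [];
  // Split on both Unix and Windows line endings.
  for (const line of prBody.split(/\r?\n/)) {
    const match = /^\s*- \[( |x|X)\] (.+)$/.exec(line);
    if (match && match[1] === " ") {
      unchecked.push(match[2].trim());
    }
  }
  return unchecked;
}

// Example PR description with one affirmation still missing.
const prBody = [
  "## AI-Generated Code Review",
  "- [x] I have read every line and understand what it does",
  "- [ ] Edge cases are identified and handled",
].join("\n");

const remaining = uncheckedItems(prBody);
console.log(remaining.length === 0 ? "Checklist complete" : remaining);
```

A gate like this only proves the boxes were ticked, not that the reviewer actually understood the code, so it complements the review rather than replacing it.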

3. Document AI Context

When you accept AI-generated code, document what you verified:

```typescript
/**
 * AI-generated code for user authentication flow.
 *
 * Verified by: @developer-name
 * Date: 2026-01-29
 *
 * Architecture decisions:
 * - Uses our standard JWT pattern from /auth/jwt.ts
 * - Implements rate limiting per /docs/rate-limits.md
 * - Error responses follow /api/error-format.ts
 *
 * Security review completed:
 * - No SQL injection vectors (parameterized queries)
 * - Proper password hashing (bcrypt, cost factor 12)
 * - Session tokens stored securely (httpOnly cookies)
 *
 * Known limitations:
 * - Does not handle MFA (future scope)
 * - Password complexity rules enforced at application level
 */
```

This documentation helps future developers understand what was verified and why the code looks the way it does.
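A verification header is only useful if it is filled in consistently. As a rough sketch, a pre-merge script could confirm that the expected sections are present. The labels below are taken from the example header; the function name `missingVerificationFields` is an assumption for illustration.

```typescript
// Section labels from the example header; adjust to your own template.
const requiredLabels: string[] = [
  "Verified by:",
  "Date:",
  "Architecture decisions:",
  "Security review completed:",
  "Known limitations:",
];

// Returns the labels that do not appear anywhere in the header comment.
function missingVerificationFields(headerComment: string): string[] {
  return requiredLabels.filter((label) => !headerComment.includes(label));
}

// Example: a header that names the verifier but skips the other sections.
const partialHeader = [
  "/**",
  " * AI-generated code for user authentication flow.",
  " * Verified by: @developer-name",
  " * Date: 2026-01-29",
  " */",
].join("\n");

console.log(missingVerificationFields(partialHeader));
```

This checks only that the sections exist, not that their contents are accurate; that judgment stays with the reviewer.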

4. Pair Verification for Critical Code

For high and critical risk code, use pair verification:

- Developer A accepts AI suggestion and documents it

- Developer B reviews independently without seeing A’s documentation

- Compare findings - discrepancies indicate unclear or problematic code

- Only merge when both reviewers reach the same conclusions

This takes more time upfront but prevents expensive production incidents.
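The comparison step can be made mechanical if both reviewers record findings as short identifiers. The sketch below assumes that convention; the `ReviewFindings` shape and identifiers such as `missing-rate-limit` are hypothetical.

```typescript
interface ReviewFindings {
  reviewer: string;
  // Short finding identifiers, e.g. "missing-rate-limit" (naming is hypothetical).
  findings: string[];
}

interface Comparison {
  agreed: string[];
  onlyA: string[];
  onlyB: string[];
  // True only when neither reviewer found something the other missed.
  readyToMerge: boolean;
}

function compareFindings(a: ReviewFindings, b: ReviewFindings): Comparison {
  // Sets remove duplicate entries and give constant-time lookups.
  const setA = new Set(a.findings);
  const setB = new Set(b.findings);
  const uniqueA = Array.from(setA);
  const uniqueB = Array.from(setB);

  const agreed = uniqueA.filter((finding) => setB.has(finding));
  const onlyA = uniqueA.filter((finding) => !setB.has(finding));
  const onlyB = uniqueB.filter((finding) => !setA.has(finding));

  return {
    agreed,
    onlyA,
    onlyB,
    readyToMerge: onlyA.length === 0 && onlyB.length === 0,
  };
}
```

Anything in `onlyA` or `onlyB` is a discrepancy worth a conversation before merge, since it suggests one reviewer read the code differently from the other.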

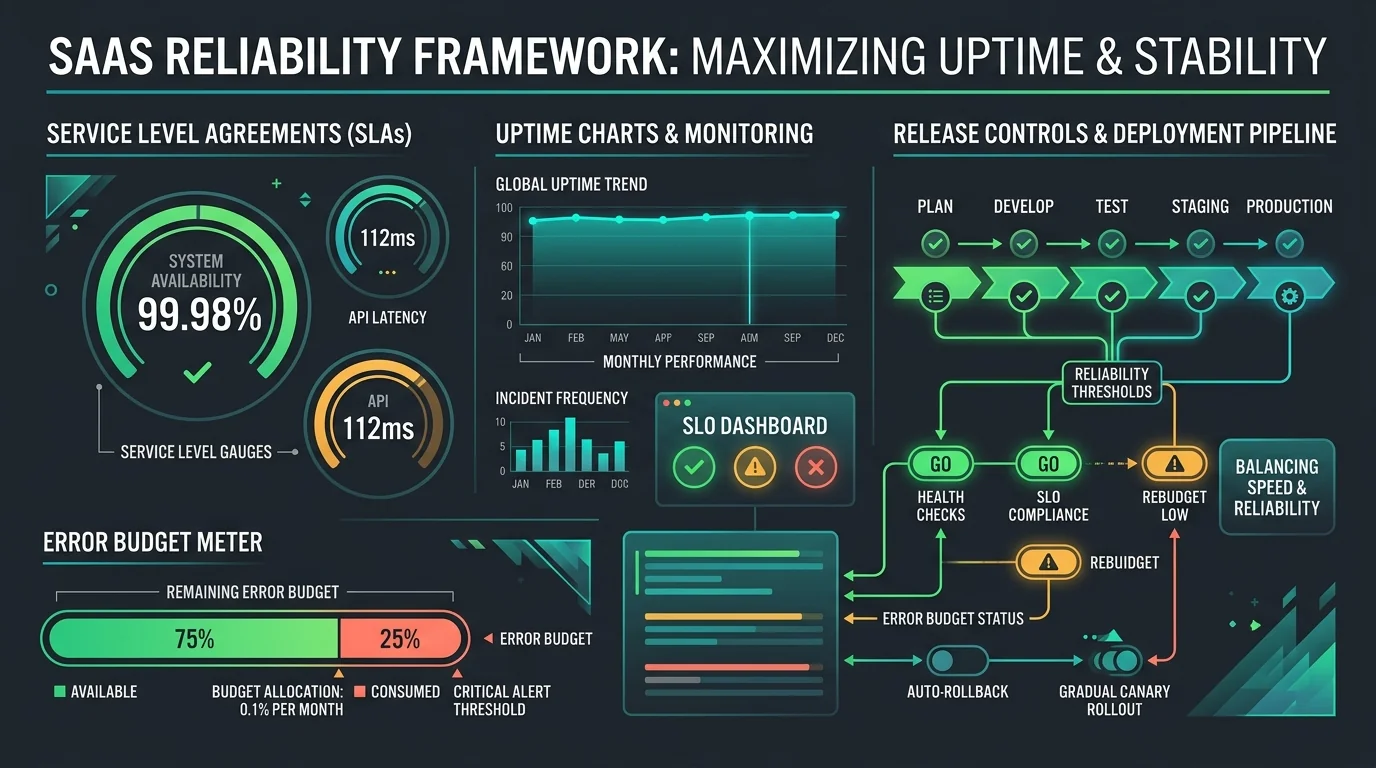

Measuring Trust: Metrics That Matter

You can’t improve what you don’t measure. Track trust-related metrics alongside velocity.

Metrics to watch:

| Metric | How to Measure | What It Tells You |
|---|---|---|
| Review time ratio | AI review time / human review time | Trust gap severity (target: <1.5x) |
| AI bug rate | Bugs from AI code / total bugs | Verification effectiveness |
| Rework rate | AI code requiring significant changes | Acceptance standards |
| Deployment confidence | Pre-deployment anxiety scores | Team trust level |
| Knowledge gaps | Code only one person understands | Documentation needs |

Review time ratio is your leading indicator. If AI code reviews take 2-3x longer than human code, your verification process needs improvement.
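As a rough illustration, the first two metrics can be computed from data most teams already have. The sketch below assumes each review is tagged as AI-generated or not and uses mean review time; both the `ReviewSample` shape and the choice of mean over median are assumptions.

```typescript
interface ReviewSample {
  aiGenerated: boolean;
  reviewMinutes: number;
}

function mean(values: number[]): number {
  if (values.length === 0) return NaN;
  return values.reduce((sum, value) => sum + value, 0) / values.length;
}

// Review time ratio = mean AI review time / mean human review time.
// Returns NaN when either group has no samples.
function reviewTimeRatio(samples: ReviewSample[]): number {
  const ai = samples.filter((s) => s.aiGenerated).map((s) => s.reviewMinutes);
  const human = samples.filter((s) => !s.aiGenerated).map((s) => s.reviewMinutes);
  return mean(ai) / mean(human);
}

// AI bug rate = bugs traced to AI code / total bugs.
function aiBugRate(bugsFromAiCode: number, totalBugs: number): number {
  return totalBugs === 0 ? 0 : bugsFromAiCode / totalBugs;
}

const samples: ReviewSample[] = [
  { aiGenerated: true, reviewMinutes: 60 },
  { aiGenerated: true, reviewMinutes: 40 },
  { aiGenerated: false, reviewMinutes: 20 },
  { aiGenerated: false, reviewMinutes: 30 },
];

const ratio = reviewTimeRatio(samples); // 50 / 25 = 2
console.log(ratio < 1.5 ? "Within target" : `Ratio ${ratio}x exceeds the 1.5x target`);
```

A median may be a better fit if a few very long reviews skew the averages.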

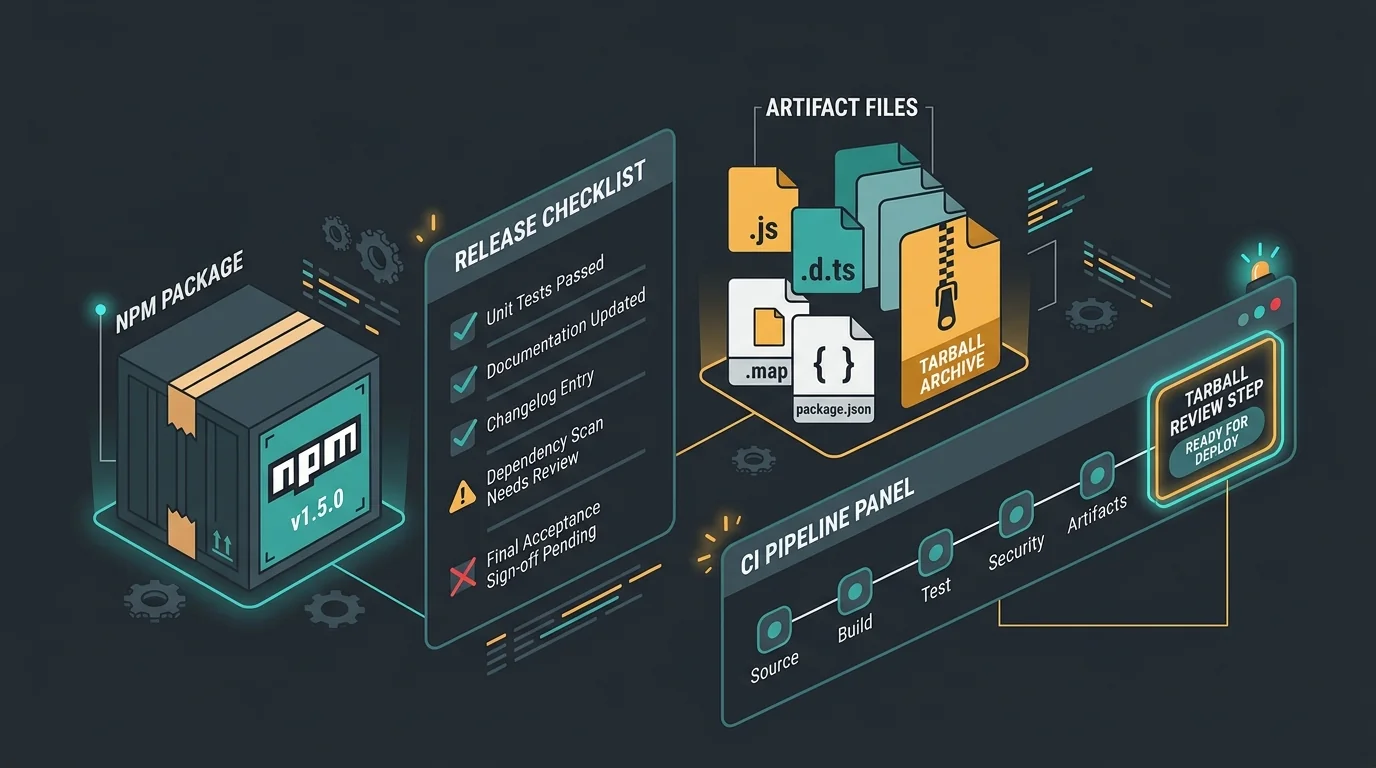

Automation: Trust at Scale

Manual verification doesn’t scale. Build automated verification into your pipeline.

Automated checks to add:

Type Safety and Linting

```jsonc
// biome.jsonc - Stricter rules for AI-generated code
{
  "linter": {
    "rules": {
      "correctness": {
        "noUnusedVariables": "error"
      },
      "suspicious": {
        "noFallthroughSwitchClause": "error",
        "noGlobalAssign": "error"
      },
      "security": {
        "noDangerouslySetInnerHtml": "error"
      },
      "complexity": {
        "noExcessiveCognitiveComplexity": "error",
        "noForEach": "warn", // Prefer for...of for easier analysis
        "noStaticOnlyClass": "warn"
      }
    }
  }
}
```

Dependency Scanning

AI tools often suggest dependencies. Automatically check:

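```bash
# Run on every PR involving AI-generated code

# Report known vulnerabilities in installed dependencies
pnpm audit

# List dependencies with newer versions available
pnpm dlx npm-check-updates

# Custom script for license compliance
./scripts/check-licenses.sh
```

Performance Profiling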

Don’t wait for production to discover performance issues:

```typescript
// tests/performance/ai-generated.test.ts
import { test, expect } from '@playwright/test';

test('AI-generated search performs acceptably', async ({ page }) => {
  const startTime = Date.now();

  // Relative URL assumes `use.baseURL` is set in playwright.config.ts.
  // page.goto resolves once the page's load event fires.
  await page.goto('/search?q=test');

  const endTime = Date.now();

  // AI code should meet same performance standards as human code
  expect(endTime - startTime).toBeLessThan(500);
});
```

Security Scanning

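```yaml
# .github/workflows/ai-verification.yml
name: AI Code Verification

on:
  pull_request:
    paths:
      - '**/*.ts'
      - '**/*.tsx'

jobs:
  security-scan:
    runs-on: ubuntu-latest
    permissions:
      contents: read
      security-events: write # Needed to upload CodeQL results
    steps:
      - uses: actions/checkout@v4

      # CodeQL runs through GitHub's action rather than an npm package
      - uses: github/codeql-action/init@v3
        with:
          languages: javascript-typescript
      - uses: github/codeql-action/analyze@v3

      - name: Run Snyk dependency scan
        run: npx snyk test
        env:
          SNYK_TOKEN: ${{ secrets.SNYK_TOKEN }}

      - name: Check for AI-generated patterns
        run: ./scripts/detect-ai-patterns.sh
```

When automated verification catches issues before human review, developers trust that the obvious problems have already been filtered out. They can focus their review on architecture and edge cases.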

Common Pitfalls

| Pitfall | Why It Happens | Fix |
|---|---|---|
| Treating AI as a senior developer | AI appears authoritative | Treat AI as a junior developer who needs supervision |
| Reviewing output, not intent | Code looks correct | Understand what problem the AI was trying to solve |
| Missing architecture review | Generated code works | Architecture review is mandatory for AI-generated modules |
| Using AI for security code without extra review | AI produces working code | Treat AI-generated security code as untrusted input: require senior review and a security audit before merge |
| No documentation of verification | Extra step feels unnecessary | Documenting what was verified creates institutional knowledge |
| Measuring only velocity | Velocity is easy to measure | Track review time ratio and AI bug rate |
| Blaming the AI | Bugs feel like AI’s fault | The developer who accepted the code owns the bugs |

When to Schedule a Verification Audit

If you’re experiencing symptoms of the trust gap, an external audit can help establish baseline verification processes.

Schedule immediately if:

- Review time ratio exceeds 2x for AI code

- You’ve had production incidents from AI-generated code

- Developers express anxiety about AI code in the codebase

- New team members struggle to understand AI-generated modules

- You’re scaling up AI tool adoption

Schedule within 3 months if:

- You recently adopted AI coding tools

- Your team is growing and needs verification standards

- You’re planning to use AI for higher-risk code

Schedule annually if:

- You have mature verification processes

- Your trust metrics are stable

- Your AI usage patterns are well-established

The Path Forward: Trust Through Verification

The 46% trust gap isn’t a reason to abandon AI coding tools. It’s a signal that teams need verification processes that match the speed of AI generation.

The teams that succeed:

- Use AI for velocity, humans for verification

- Measure trust, not just velocity

- Automate the obvious, review the meaningful

- Document what was verified and why

The goal isn’t to trust AI code blindly. The goal is to know exactly what was verified, so trust is earned through process rather than assumed.

How We Can Help

We’ve been helping teams build verification processes for AI-augmented development. Our AI Code Verification Assessment evaluates your current practices, identifies trust gaps, and implements verification frameworks that restore confidence without sacrificing velocity.

You’ll get:

- Trust gap analysis with baseline metrics

- Verification process documentation

- Automated verification pipeline setup

- Team training on AI code review best practices

Book a verification assessment to close your trust gap.

Already experiencing the effects of the verification crisis? We can help you build processes that let your team move fast with confidence.