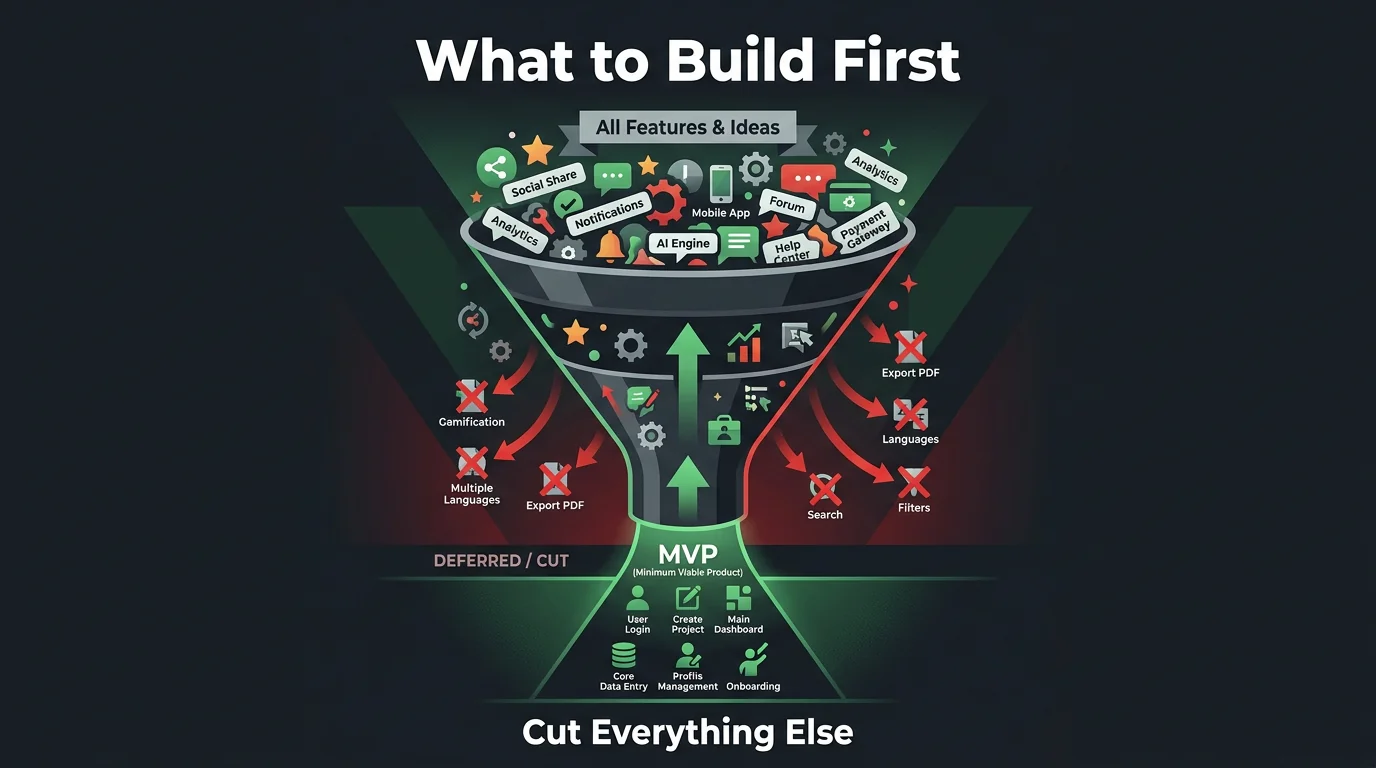

MVP Feature Prioritization: What to Build First

Every feature feels essential when you're building an MVP. Here's a framework for prioritizing what to build first based on customer discovery and learning goals.

Jason Overmier

Innovative Prospects Team

You have a product vision. You’ve talked to potential customers. You know what problem you’re solving. Now comes the hard part: deciding what to build first.

Every feature feels essential. Each one was requested by a potential customer. Each one seems to differentiate you from competitors. But trying to build them all guarantees you’ll ship late, run out of money, or launch something nobody wants.

MVP feature prioritization isn’t about building the “minimum” product. It’s about building the right product to validate your riskiest assumptions.

Quick Answer

| Priority Level | Criteria | Build in MVP? |

|---|---|---|

| Must-have | Core to value proposition, can’t test without it | Yes |

| Should-have | Enhances value, but not required for learning | Maybe |

| Nice-to-have | Would be great, but doesn’t change outcomes | No |

| Won’t-have | Explicitly deferred to post-MVP | No |

The MVP contains only must-haves and critical should-haves. Everything else waits.

The MVP Purpose

What MVPs Are For

| Purpose | What It Means |

|---|---|

| Validate problem-solution fit | Do customers have this problem? Does our solution help? |

| Test riskiest assumptions | What would kill the business if we’re wrong? |

| Start the learning loop | Ship, measure, learn, iterate |

| Conserve resources | Time and money are finite |

What MVPs Are Not For

| Misconception | The Reality |

|---|---|

| Impressing investors | Investors want traction, not features |

| Competing on completeness | You can’t out-feature established competitors |

| Looking professional | Users care if it works, not if it’s polished |

| Generating revenue immediately | Learning comes first; revenue follows |

Prioritization Framework

Step 1: List All Features

Start with a comprehensive list of every feature you think you need. Don’t filter yet. Include:

- Features customers requested

- Features competitors have

- Features you think are cool

- Features for “completeness”

Step 2: Identify Your Riskiest Assumption

What has to be true for your product to succeed?

| Assumption Type | Example |

|---|---|

| Problem exists | People will pay to solve this |

| Solution works | Our approach actually helps |

| Acquisition works | We can reach customers affordably |

| Retention works | Users come back after trying |

| Monetization works | We can charge enough to be profitable |

Your riskiest assumption drives your MVP scope. If acquisition is the riskiest assumption, the MVP should include features that enable acquisition tests, such as a referral system or share functionality. If the solution itself is the riskiest, focus on core functionality.
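One way to make "riskiest" concrete is to score each assumption on how damaging it would be if false and how little evidence you have for it. The sketch below is illustrative only: the 1-5 scales, the `impact * uncertainty` product, and the sample scores are assumptions, not part of the framework itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumption:
    name: str
    impact: int       # 1-5: damage to the business if this is false (hypothetical scale)
    uncertainty: int  # 1-5: how little evidence we have so far (hypothetical scale)

    @property
    def risk(self) -> int:
        # Simple product score; any monotonic combination would work here.
        return self.impact * self.uncertainty


def riskiest(assumptions: list[Assumption]) -> Assumption:
    """Return the assumption with the highest risk score."""
    if not assumptions:
        raise ValueError("need at least one assumption")
    return max(assumptions, key=lambda a: a.risk)


# Sample scores are made up for illustration.
assumptions = [
    Assumption("Problem exists", impact=5, uncertainty=2),
    Assumption("Solution works", impact=5, uncertainty=4),
    Assumption("Acquisition works", impact=4, uncertainty=3),
    Assumption("Retention works", impact=4, uncertainty=3),
    Assumption("Monetization works", impact=3, uncertainty=3),
]

top = riskiest(assumptions)
print(f"Riskiest assumption: {top.name} (score {top.risk})")
```

The scores matter less than the conversation they force: if two assumptions tie, that is a signal to gather more evidence before committing scope to either.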

Step 3: Map Features to Assumptions

For each feature, ask: “Does this feature help validate an assumption?”

| Feature | Assumption Tested | Priority |

|---|---|---|

| User registration | Acquisition, retention | Must-have |

| Core workflow | Solution works | Must-have |

| Payment processing | Monetization | Should-have (can test with fake payments) |

| Admin dashboard | Operational | Won’t-have |

| Social sharing | Acquisition | Should-have |

| Advanced settings | Edge case handling | Won’t-have |
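The mapping above can also be kept as plain data, which makes it easy to see which assumptions have no feature testing them and which features test nothing. This is a minimal sketch; treating "Operational" and "Edge case handling" as "tests no business assumption" is an interpretation, and the function names are hypothetical.

```python
from collections import defaultdict

# Feature -> assumptions it helps validate, taken from the table above.
# Features whose entry is not a business assumption map to an empty list.
FEATURE_MAP = {
    "User registration": ["Acquisition", "Retention"],
    "Core workflow": ["Solution works"],
    "Payment processing": ["Monetization"],
    "Admin dashboard": [],
    "Social sharing": ["Acquisition"],
    "Advanced settings": [],
}


def features_by_assumption(feature_map):
    """Invert the mapping: assumption -> features that test it."""
    grouped = defaultdict(list)
    for feature, assumptions in feature_map.items():
        for assumption in assumptions:
            grouped[assumption].append(feature)
    return dict(grouped)


def untested(feature_map):
    """Features that validate no assumption: candidates for the won't-have list."""
    return [f for f, assumptions in feature_map.items() if not assumptions]


grouped = features_by_assumption(FEATURE_MAP)
print(grouped["Acquisition"])
print(untested(FEATURE_MAP))
```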

Step 4: Apply the MoSCoW Filter

For each feature that tests an assumption:

| Question | If Yes | If No |

|---|---|---|

| “Can we test without this?” | Nice-to-have or Won’t-have | Must-have |

| “Is there a simpler way to test this?” | Consider simpler alternative | Keep as-is |

| “Does this affect 80%+ of users?” | Should-have or Must-have | Nice-to-have |

| “Can we fake this manually?” | Won’t-have (manual MVP) | Keep as-is |
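The four questions can be applied in a fixed order so every feature gets one answer. The table does not say which question wins when answers conflict, so the precedence below (manual workaround first, then testability, then reach) is an assumption, as are the `Answers` and `classify` names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Answers:
    can_test_without: bool     # "Can we test without this?"
    simpler_way_exists: bool   # "Is there a simpler way to test this?"
    affects_most_users: bool   # "Does this affect 80%+ of users?"
    can_fake_manually: bool    # "Can we fake this manually?"


def classify(a: Answers) -> str:
    """Turn the four answers into a single priority (assumed precedence)."""
    if a.can_fake_manually:
        priority = "Won't-have (manual MVP)"
    elif not a.can_test_without:
        priority = "Must-have"
    elif a.affects_most_users:
        priority = "Should-have"
    else:
        priority = "Nice-to-have"

    # A simpler alternative only matters for features we actually build.
    if a.simpler_way_exists and priority in ("Must-have", "Should-have"):
        priority += " (simplified)"
    return priority


print(classify(Answers(False, False, True, False)))  # core workflow
print(classify(Answers(True, False, False, True)))   # admin dashboard
print(classify(Answers(False, True, True, False)))   # user registration
print(classify(Answers(True, False, False, False)))  # advanced settings
```

Writing the precedence down, even informally, keeps two people from classifying the same feature differently.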

Feature Categorization

The Core (Must-Have)

Features without which you cannot test your core assumption:

| Characteristic | Example |

|---|---|

| Enables primary workflow | For a task manager: creating and completing tasks |

| Differentiates from alternatives | Unique approach to solving the problem |

| Required for value delivery | Users can’t get value without it |

Include in MVP. These are non-negotiable.

The Enhancers (Should-Have)

Features that improve the experience but aren’t required for learning:

| Characteristic | Example |

|---|---|

| Improves usability | Keyboard shortcuts, better UI |

| Handles edge cases | Error messages, validation |

| Enables secondary workflows | Exporting data, integrations |

Consider for MVP only if they enable critical learning or don’t add significant development time.

The Nice-to-Haves

Features that would be great but don’t affect learning:

| Characteristic | Example |

|---|---|

| Polish items | Animations, themes |

| Advanced features | Power user features |

| Comprehensive coverage | Every possible use case |

Exclude from MVP. Add post-launch based on user feedback.

The Explicit Cuts

Features you commit to not building for MVP:

| Category | Why Cut |

|---|---|

| Admin features | Can be handled manually initially |

| Scale features | You don’t have scale yet |

| Competitive parity | Users don’t switch for parity |

| Future needs | YAGNI (You Ain’t Gonna Need It) |

Document these so stakeholders know they’re deferred, not forgotten.

Practical Examples

Example: B2B Task Management Tool

Vision: Task management for remote software teams with AI-powered prioritization.

All proposed features:

- User registration/authentication

- Task creation/editing/deletion

- AI priority suggestions

- Team workspaces

- Task assignment

- Due dates and reminders

- Time tracking

- Reporting dashboard

- Integrations (Slack, GitHub, Jira)

- Mobile app

- Admin panel

- Custom themes

- API access

Riskiest assumption: AI priority suggestions actually help teams be more productive.

Prioritization:

| Feature | Assumption Tested | Decision |

|---|---|---|

| User registration | Acquisition | Must-have (simplified) |

| Task CRUD | Core workflow | Must-have |

| AI priority | Riskiest assumption | Must-have |

| Team workspaces | Solution context | Must-have (simplified) |

| Task assignment | Core workflow | Must-have |

| Due dates | Core workflow | Should-have |

| Reminders | Retention | Won’t-have (fall back to email only) |

| Time tracking | Differentiator | Won’t-have |

| Reporting | None (nice-to-have) | Won’t-have |

| Integrations | None (nice-to-have) | Won’t-have |

| Mobile app | None (nice-to-have) | Won’t-have |

| Admin panel | Operational | Won’t-have (manual) |

| Custom themes | None (nice-to-have) | Won’t-have |

| API access | None (nice-to-have) | Won’t-have |

MVP scope: User registration, task management, AI prioritization, team workspaces, task assignment, basic due dates.
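The scope line follows mechanically from the decision column: every must-have, plus any should-have judged critical. A short sketch using the decisions from this example; the `CRITICAL_SHOULD_HAVES` set and the `mvp_scope` helper are hypothetical names, and marking due dates as critical is inferred from the stated scope.

```python
# Decisions copied from the table above (qualifiers such as "simplified" dropped).
DECISIONS = {
    "User registration": "Must-have",
    "Task CRUD": "Must-have",
    "AI priority": "Must-have",
    "Team workspaces": "Must-have",
    "Task assignment": "Must-have",
    "Due dates": "Should-have",
    "Reminders": "Won't-have",
    "Time tracking": "Won't-have",
    "Reporting": "Won't-have",
    "Integrations": "Won't-have",
    "Mobile app": "Won't-have",
    "Admin panel": "Won't-have",
    "Custom themes": "Won't-have",
    "API access": "Won't-have",
}

# Should-haves promoted into the MVP (inferred from the stated scope).
CRITICAL_SHOULD_HAVES = {"Due dates"}


def mvp_scope(decisions, critical_should_haves):
    """Must-haves plus the should-haves explicitly marked critical."""
    return [
        feature
        for feature, decision in decisions.items()
        if decision == "Must-have"
        or (decision == "Should-have" and feature in critical_should_haves)
    ]


scope = mvp_scope(DECISIONS, CRITICAL_SHOULD_HAVES)
deferred = [f for f in DECISIONS if f not in scope]
print(f"In scope ({len(scope)}): {', '.join(scope)}")
print(f"Deferred ({len(deferred)}): {', '.join(deferred)}")
```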

Example: Consumer Fitness App

Vision: AI-powered personal trainer that adapts workouts based on performance.

Riskiest assumption: Users will trust AI-generated workouts enough to follow them.

Prioritization:

| Feature | Assumption Tested | Decision |

|---|---|---|

| User registration | Acquisition | Must-have |

| Fitness assessment | Personalization | Must-have |

| AI workout generation | Riskiest assumption | Must-have |

| Workout logging | Retention | Must-have |

| Progress tracking | Retention | Should-have |

| Social features | Acquisition | Won’t-have |

| Meal planning | None (nice-to-have) | Won’t-have |

| Wearable sync | None (nice-to-have) | Won’t-have |

| Video demonstrations | Usability | Should-have (static images first) |

MVP scope: Registration, assessment, AI workout generation, workout logging, basic progress tracking.

Technical Debt as Strategy

An MVP is intentionally imperfect. Some debts are strategic:

| Debt Type | Accept in MVP? | Why |

|---|---|---|

| UI polish | Yes | Users care about function, not looks initially |

| Performance (at low scale) | Yes | You don’t have scale yet |

| Edge case handling | Partial | Handle common cases, document edge cases |

| Security | No | Never compromise on security basics |

| Code quality | Partial | Clean enough to iterate, not pristine |

| Testing | Partial | Test critical paths, not everything |

| Documentation | Yes | Small team, code is documentation |

The key is knowing which debts you’re taking and having a plan to pay them down.

Common Pitfalls

| Pitfall | Why It Happens | The Fix |

|---|---|---|

| Feature creep | Each feature seems small | Ruthless prioritization, explicit won’t-have list |

| Competitor matching | Fear of looking incomplete | Focus on your differentiation, not parity |

| Perfectionism | Wanting to impress | Remember the MVP is for learning, not impressing |

| Design by committee | Incorporating all feedback | Synthesize feedback, don’t accumulate features |

| Technical over-engineering | Building for scale you don’t have | Build for today’s users, plan for tomorrow’s |

The Concorde Fallacy

Don’t let sunk costs drive feature decisions. If a feature isn’t essential for learning, cut it regardless of how much work has gone into it.

| Sunk Cost | The Reality |

|---|---|

| “We already designed it” | Design is cheap compared to development |

| “The code is half-written” | Finishing it still costs time, and every shipped feature adds maintenance |

| “Stakeholders expect it” | Manage expectations, don’t build unwanted features |

Decision Documentation

Document your prioritization decisions for future reference:

## MVP Feature: [Feature Name]

**Decision:** Must-have / Should-have / Nice-to-have / Won't-have

**Assumption tested:** [What this feature helps validate]

**Why this priority:**

[2-3 sentences on reasoning]

**Dependencies:** [What else this feature requires]

**Success metric:** [How we'll know if it's working]

**If Won't-have, when revisited:** [Post-MVP / v2 / Never]

This documentation helps when features get re-prioritized or stakeholders question decisions.
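For teams that keep decisions alongside the code, a record in the format above could be generated from structured data. This is a minimal sketch: the `Decision` class and its field names are hypothetical, and the sample values are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    feature: str
    priority: str            # Must-have / Should-have / Nice-to-have / Won't-have
    assumption_tested: str
    rationale: str
    dependencies: str
    success_metric: str
    revisit: Optional[str] = None  # Post-MVP / v2 / Never; only used for Won't-have

    def to_markdown(self) -> str:
        """Render the decision in the record format shown above."""
        lines = [
            f"## MVP Feature: {self.feature}",
            "",
            f"**Decision:** {self.priority}",
            "",
            f"**Assumption tested:** {self.assumption_tested}",
            "",
            "**Why this priority:**",
            "",
            self.rationale,
            "",
            f"**Dependencies:** {self.dependencies}",
            "",
            f"**Success metric:** {self.success_metric}",
        ]
        # The revisit line only applies to deferred features.
        if self.priority == "Won't-have" and self.revisit:
            lines += ["", f"**If Won't-have, when revisited:** {self.revisit}"]
        return "\n".join(lines)


# Illustrative values only.
record = Decision(
    feature="Admin panel",
    priority="Won't-have",
    assumption_tested="None (operational)",
    rationale="Admin tasks can be handled manually at MVP scale.",
    dependencies="None",
    success_metric="N/A",
    revisit="Post-MVP",
).to_markdown()

print(record)
```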

MVP feature prioritization determines whether you ship something valuable or run out of runway. If you’re planning an MVP and want help prioritizing features for maximum learning, book a consultation. We’ve guided dozens of startups through this process.