How to Security-Review AI Coding Tools Before Rollout

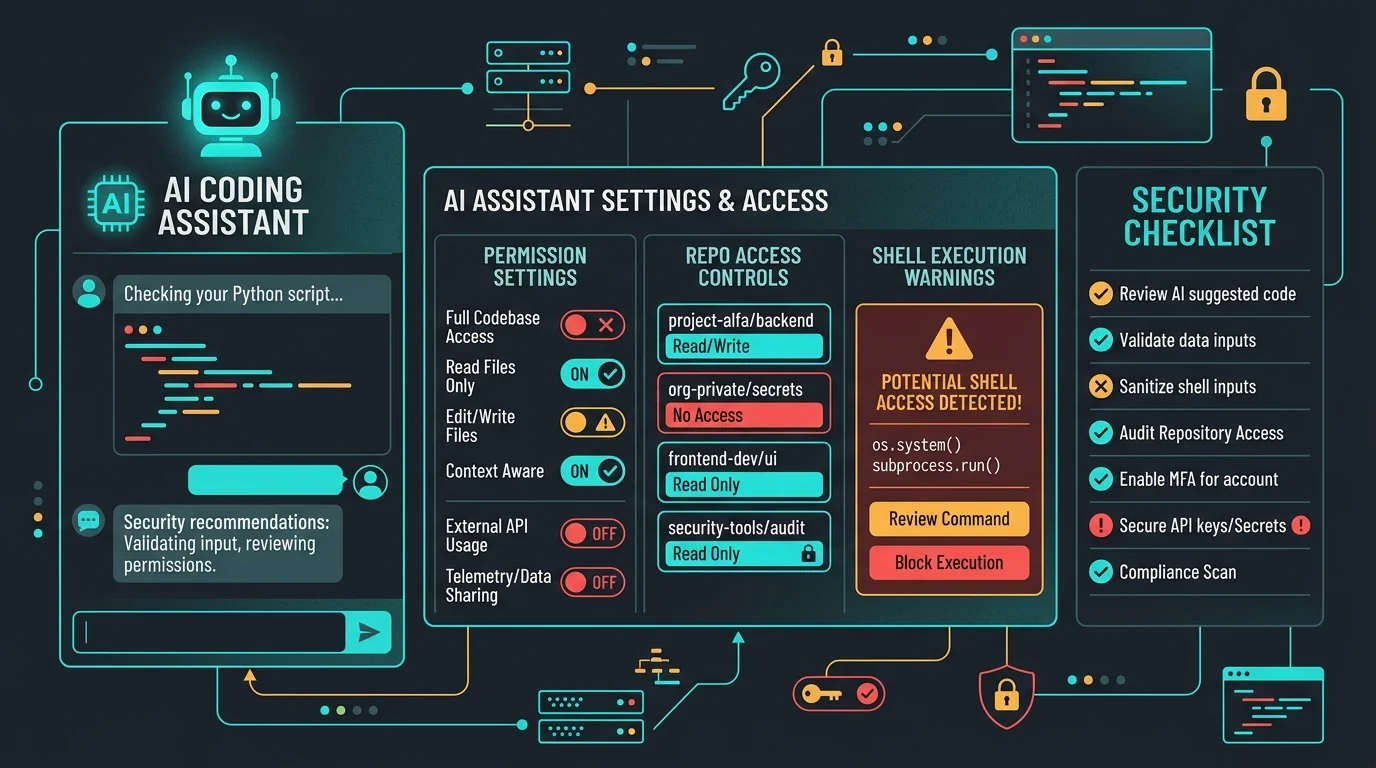

AI coding tools do not just generate code. They can touch repositories, terminals, issue trackers, and internal context. Before you approve one across your team, review it like any other privileged engineering system.

Jason Overmier

Innovative Prospects Team

Most security reviews for AI tools still focus too narrowly on output quality. That matters, but it is not the whole risk model.

Modern AI coding tools may get access to:

- your source repositories

- local files

- shell commands

- build logs

- tickets and docs

- sometimes credentials or production-adjacent context

That makes them privileged developer tooling, not just autocomplete.

Review Checklist

| Area | What to verify |
|---|---|
| Data retention | What prompts, files, and outputs are stored, and for how long |
| Training use | Whether your data is used to improve the vendor’s models |
| Access model | Which repos, files, and tools the product can reach |
| Permission controls | Whether dangerous actions require explicit approval |
| Auditability | What logs exist for prompts, actions, and admin decisions |
| Identity and admin | SSO, SCIM, RBAC, and offboarding support |
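One way to keep the checklist honest is to track it as data rather than as a slide. The sketch below is only an illustration: the `Finding` class and the `open_items` and `review_complete` helpers are hypothetical names, not part of any vendor product. It treats an area as open until someone has recorded an answer and verified it.

```python
from dataclasses import dataclass

# Checklist areas copied from the review table.
CHECKLIST_AREAS = (
    "Data retention",
    "Training use",
    "Access model",
    "Permission controls",
    "Auditability",
    "Identity and admin",
)


@dataclass
class Finding:
    """One reviewed area (hypothetical structure for illustration)."""
    area: str
    answer: str = ""        # what the vendor documented or you observed
    verified: bool = False  # True only after your team confirmed the answer


def open_items(findings):
    """Return checklist areas that are missing, unanswered, or unverified."""
    by_area = {f.area: f for f in findings}
    return [
        area
        for area in CHECKLIST_AREAS
        if area not in by_area
        or not by_area[area].answer.strip()
        or not by_area[area].verified
    ]


def review_complete(findings):
    """The review is complete only when no checklist area is still open."""
    return not open_items(findings)
```

A review with a verified retention answer and nothing else would still report five open areas, which is the point: a partial answer should not read as approval.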

Questions Security Teams Should Ask

- Can the tool execute shell commands?

- Can it access private repos or local secrets implicitly?

- Can admins restrict which models, tools, or actions are available?

- What evidence exists if we need to investigate misuse?

- How quickly can access be revoked for a contractor or departing employee?

If a vendor cannot answer these questions clearly, slow the rollout until it can.
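For the evidence question in particular, it helps to know what you would want a log entry to contain before you read the vendor's documentation. The sketch below is an assumption about a reasonable minimum, not any vendor's actual log format: the `audit_record` function and its field names are hypothetical, and the three event types mirror the prompts, actions, and admin decisions named in the checklist.

```python
import json
from datetime import datetime, timezone

# Event categories taken from the Auditability row of the checklist.
EVENT_TYPES = {"prompt", "action", "admin_decision"}


def audit_record(event_type, user, detail, when=None):
    """Serialize one audit event as a JSON line (illustrative field names)."""
    if event_type not in EVENT_TYPES:
        raise ValueError(f"unknown event type: {event_type}")
    when = when or datetime.now(timezone.utc)
    return json.dumps(
        {
            "timestamp": when.isoformat(),  # timezone-aware, UTC by default
            "type": event_type,
            "user": user,
            "detail": detail,
        },
        sort_keys=True,
    )
```

If the vendor's logs cannot answer who did what and when at roughly this level of detail, investigating misuse later will be difficult.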

Internal Guardrails Worth Setting

- define which repositories the tool may access

- keep production credentials off developer workstations where possible

- require approval for shell execution or file writes if the tool supports them

- publish prompt hygiene guidance for engineers

- keep manual review requirements for auth, payments, and infra changes
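Several of these guardrails can be written down as a policy that is checked rather than remembered. The following is a minimal sketch under stated assumptions: the `GuardrailPolicy` class, the action names `shell_exec` and `file_write`, and the path patterns are all hypothetical, and a real tool may expose different controls or none at all.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass
class GuardrailPolicy:
    """Hypothetical internal policy; names and patterns are illustrative."""
    allowed_repos: set = field(default_factory=set)
    # Actions that need a human to approve them first.
    approval_required: set = field(
        default_factory=lambda: {"shell_exec", "file_write"}
    )
    # Paths whose changes always get manual review (auth, payments, infra).
    manual_review_globs: tuple = ("*auth*", "*payment*", "infra/*")


def check_action(policy, repo, action, approved=False):
    """Return (allowed, reason) for an action the tool wants to take."""
    if repo not in policy.allowed_repos:
        return False, "repo not on allowlist"
    if action in policy.approval_required and not approved:
        return False, "explicit approval required"
    return True, "ok"


def needs_manual_review(policy, changed_paths):
    """Return the changed paths that match a manual-review pattern."""
    return [
        path
        for path in changed_paths
        if any(fnmatchcase(path, glob) for glob in policy.manual_review_globs)
    ]
```

The design choice here is deny by default: a repository that is not listed is refused, and a risky action is refused until someone approves it. The glob patterns are deliberately broad, so expect some false positives in exchange for not missing an auth or payments change.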

Common Pitfalls

| Pitfall | Why It Happens | Fix |
|---|---|---|
| Teams review the demo, not the permissions | The interface feels like chat | Review real access and retention controls |
| Security asks only about model safety | That is the visible AI question | Review repo, shell, and context boundaries too |
| Rollout happens team-by-team ad hoc | Adoption outpaces governance | Set a standard approval process |
| Offboarding is weak | Tooling was treated like optional productivity software | Require identity and deprovisioning controls |
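The offboarding pitfall is the easiest one to test for. The sketch below assumes you can export two lists, the accounts holding a seat in the AI tool and the accounts currently active in your identity provider. The `stale_seats` function is a hypothetical helper, and how you obtain either list depends entirely on your vendor and directory.

```python
def stale_seats(tool_seats, active_users):
    """Return tool accounts with no matching active identity, sorted.

    Both inputs are iterables of email-style identifiers; comparison
    ignores case and surrounding whitespace.
    """
    active = {user.strip().lower() for user in active_users}
    seats = {seat.strip().lower() for seat in tool_seats}
    return sorted(seats - active)
```

Any account this returns still has tool access after the person behind it has left or been deactivated, which is exactly the gap that SSO and SCIM provisioning are meant to close.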

The Better Framing

An AI coding tool is part editor, part workflow engine, and sometimes part agent. Review it with the same seriousness you would apply to any system that can read internal code and act on a developer’s behalf.

If your team is evaluating AI coding tools and wants a sober security review before rollout, contact us. We help teams assess vendor controls, permission models, and safe adoption boundaries.