The 'Vibe Code' Trap: When AI Prototypes Become Production Nightmares

Your AI-generated prototype works. But under the hood, you've accumulated technical debt that could cost you your product launch.

Jason Overmier

Innovative Prospects Team

You shipped it. The prototype your team built with AI assistance is live, users are signing up, and your board is impressed. The code works.

But you’re starting to notice things. The deployment takes longer each time. The AI-generated authentication module has a race condition nobody can reproduce. Your new hire stares at the codebase and asks who wrote it—and you can’t really answer.

You’ve fallen into the vibe code trap. And the bill is coming due.

What Is “Vibe Code”?

“Vibe code” is code that seems correct because it passes type checking and handles the happy path, but fails under real-world conditions. It’s the inevitable result of rapid AI prototyping without production discipline.

AI tools are exceptional at generating syntactically correct code that solves the specific problem you present. They cannot see the full system context, anticipate edge cases, or make architectural trade-offs. They’re not incompetent—they’re just not there for the 2 AM debugging session when the payment processor times out.

The trap isn’t using AI. The trap is shipping AI-generated code without treating it like the technical debt it inherently is.

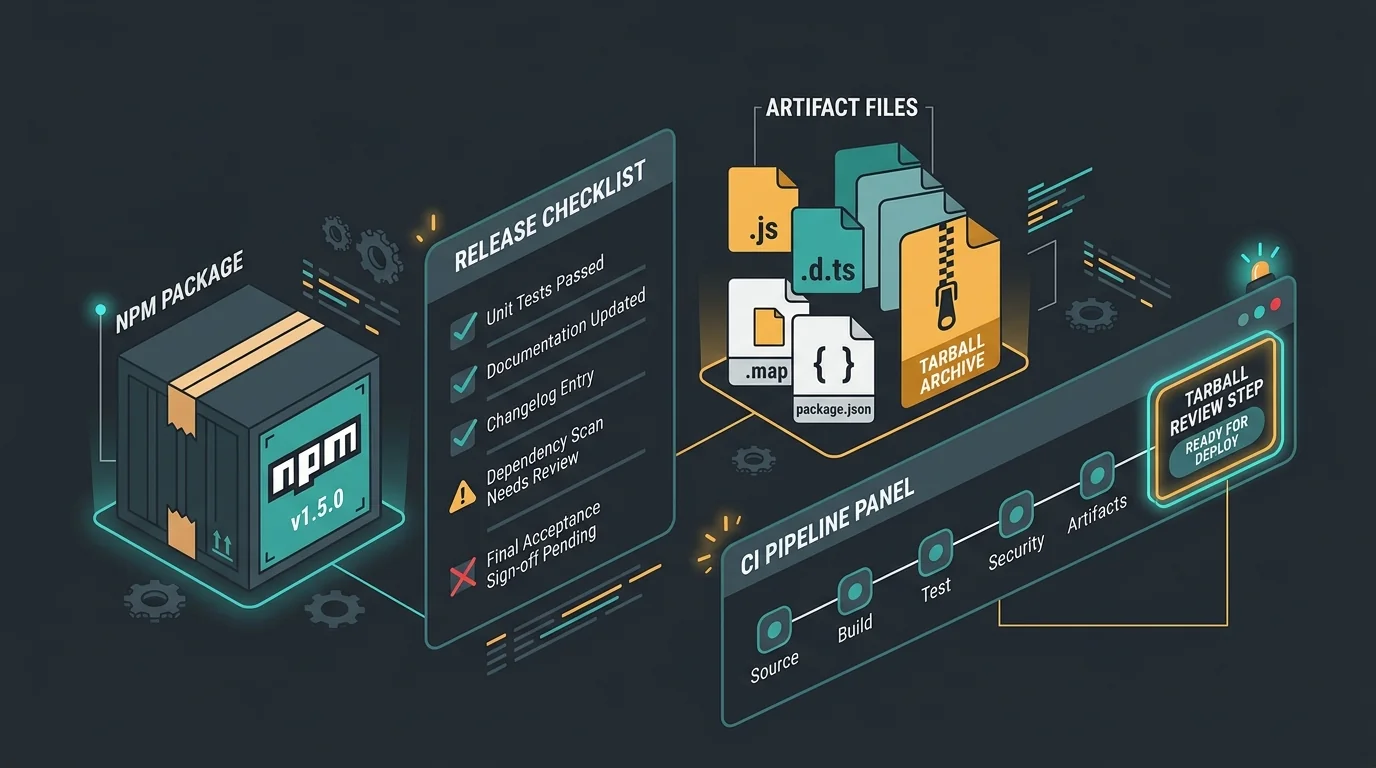

The Production Readiness Checklist

If your team has been shipping AI-assisted code, run through this audit. Every “no” is a red flag.

| Area | Check | Status |
|---|---|---|
| Error Handling | Are failures contained so that no single point of failure can cascade across the system? | [ ] |
| State Management | Does the code handle race conditions without serving stale data? | [ ] |
| Security | Are inputs validated at the boundary, not just in components? | [ ] |
| Observability | Can you trace a request from entry to database without guessing? | [ ] |
| Testing | Do you have integration tests, not just unit tests for happy paths? | [ ] |
| Documentation | Could a new engineer understand the architecture without asking? | [ ] |

Four or more unchecked boxes? You’re not at prototype stage anymore—you’re in production debt.

Why AI Prototypes Accumulate Hidden Debt

AI tools excel at generating code that looks right in isolation. But production systems aren’t a collection of isolated functions—they’re interconnected networks of trade-offs.

The Happy Path Fallacy

When you ask an AI to “write a function that processes payments,” it generates code that processes a payment successfully. What it doesn’t generate:

- Retry logic for transient failures

- Idempotency guards for duplicate requests

- Circuit breakers for downstream outages

- Comprehensive logging for failed transactions

These aren’t edge cases. In production, the unhappy path is the path. A payment integration that succeeds 99% of the time still fails one million times a month once you are processing 100 million transactions. Your AI prototype never had to think about that.
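As one illustration of the gaps listed above, here is a minimal sketch of an idempotency guard. The names `makeIdempotent` and `ChargeResult` are hypothetical, and the in-memory `Map` is an assumption made to keep the example self-contained; a real system would more likely keep idempotency keys in a shared, persistent store.

```typescript
// Hypothetical result shape; a real payment processor returns far more detail.
type ChargeResult = { chargeId: string; amountCents: number };

// Wraps a charge function so that repeated calls with the same idempotency
// key reuse the first call's promise instead of charging again.
function makeIdempotent(
  charge: (amountCents: number) => Promise<ChargeResult>,
): (key: string, amountCents: number) => Promise<ChargeResult> {
  // Assumption: in-memory only, so keys are lost on restart and are not
  // shared between server instances.
  const seen = new Map<string, Promise<ChargeResult>>();

  return (key, amountCents) => {
    const existing = seen.get(key);
    if (existing !== undefined) return existing; // duplicate request

    const pending = charge(amountCents).catch((err) => {
      // Forget failed attempts so the caller can retry with the same key.
      seen.delete(key);
      throw err;
    });
    seen.set(key, pending);
    return pending;
  };
}
```

Because the in-flight promise is stored, a double-click or a client retry that arrives while the first charge is still pending shares that charge rather than starting a second one.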

The Context Ceiling

AI models have a finite context window. They can see the function you’re working on, maybe the file you’re editing, but they cannot hold your entire system architecture in working memory.

This leads to locally optimal decisions that are globally disastrous:

- Duplicate authentication logic because the AI didn’t know a module existed

- Inconsistent error handling because each prompt was solved in isolation

- Tight coupling between components because nobody enforced separation of concerns

The code works. But every change requires touching five files that should have been unrelated.

The Illusion of Velocity

You shipped features twice as fast. Your velocity charts look incredible. But velocity measures output, not sustainability.

Technical debt compounds. The shortcuts you took to ship quickly often cost far more to fix later than they saved up front. You’re not ahead; you borrowed against your future development capacity.

The Signs Your AI-Built Codebase Is in Trouble

You don’t have to wait for the production outage. Watch for these leading indicators.

Code Review Fatigue

Your engineers spend more time working out what the code does than evaluating whether it should do it that way. AI-generated code often lacks coherent intent: every function was written by a different “author” with different assumptions.

Reviews become guesswork. Is this pattern intentional? Did the AI hallucinate a library function? Nobody knows, so everyone approves defensively.

The “Works on My Machine” Epidemic

AI-generated code often relies on implicit assumptions—environment variables, database state, timing. These assumptions hold in the development environment but break in production.

Your bug backlog fills with “intermittent” issues that nobody can reproduce. That’s not a mystery. It’s a sign your code is brittle.
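One way to surface implicit environment assumptions is to validate configuration once at startup. The sketch below assumes two hypothetical settings, `PAYMENT_API_BASE_URL` and `PAYMENT_TIMEOUT_MS`; substitute whatever your service actually depends on.

```typescript
// Hypothetical settings, chosen only for illustration.
interface AppConfig {
  paymentApiBaseUrl: string;
  paymentTimeoutMs: number;
}

// Reads configuration from an env-like object and reports every problem at
// once, so a misconfigured deploy fails at startup instead of mid-request.
function loadConfig(env: Record<string, string | undefined>): AppConfig {
  const problems: string[] = [];

  const paymentApiBaseUrl = env["PAYMENT_API_BASE_URL"] ?? "";
  if (paymentApiBaseUrl === "") {
    problems.push("PAYMENT_API_BASE_URL is required");
  }

  const rawTimeout = env["PAYMENT_TIMEOUT_MS"] ?? "";
  const paymentTimeoutMs = Number(rawTimeout);
  if (rawTimeout === "" || !Number.isInteger(paymentTimeoutMs) || paymentTimeoutMs <= 0) {
    problems.push("PAYMENT_TIMEOUT_MS must be a positive integer");
  }

  if (problems.length > 0) {
    throw new Error(`Invalid configuration: ${problems.join("; ")}`);
  }
  return { paymentApiBaseUrl, paymentTimeoutMs };
}
```

In a Node.js service this would typically be called once as `loadConfig(process.env)` before the server starts listening, which turns a class of "intermittent" production bugs into an immediate, readable startup error.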

Ramp-Up Time Explodes

New hires should be productive within weeks. In AI-heavy codebases, ramp-up can take months because there is no coherent architectural vision to learn, just a collection of generated solutions.

Senior engineers leave because they’re tired of maintaining code nobody understands. Knowledge becomes tribal, concentrated in whoever happened to prompt the AI.

What Production Hardening Actually Means

“Production hardening” isn’t a buzzword. It’s the systematic process of turning prototype code into production systems.

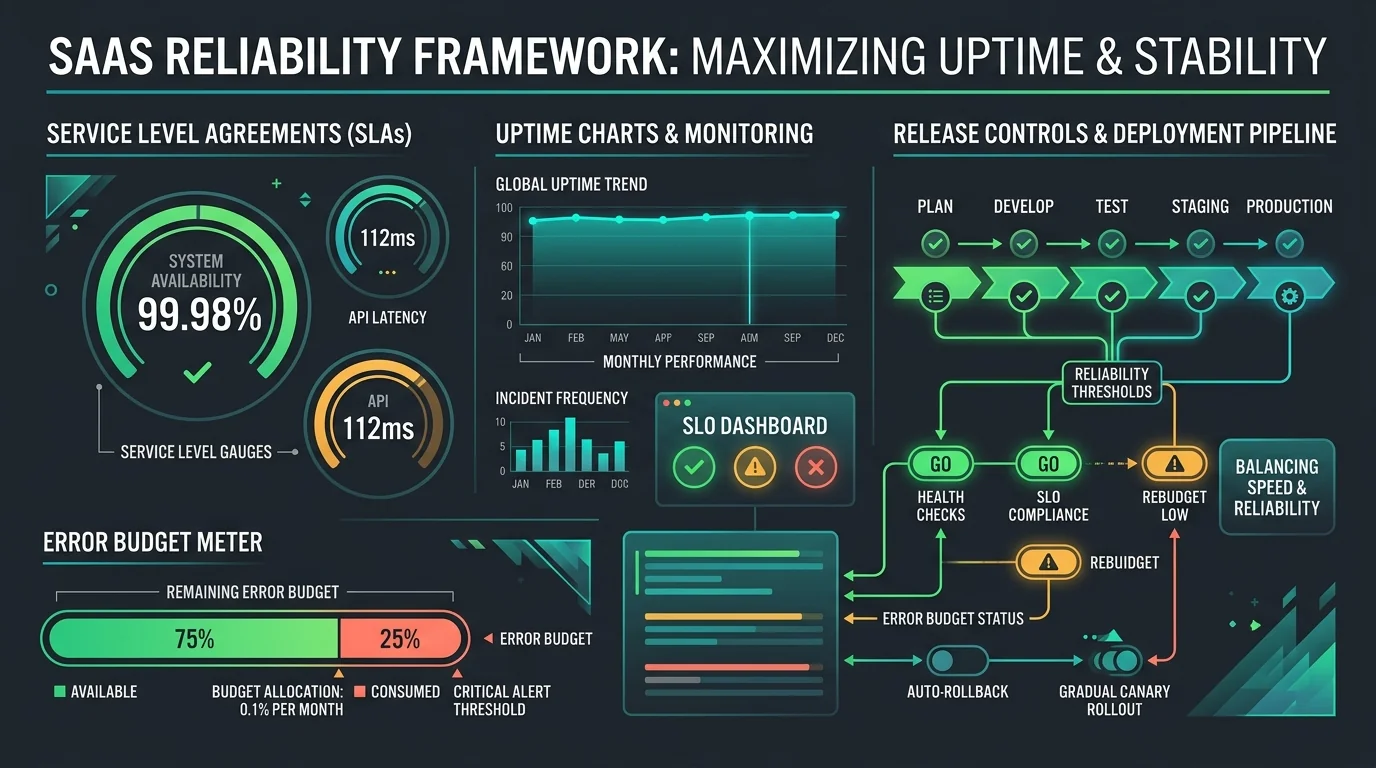

Hardening Layer 1: Observability

You cannot fix what you cannot see. Production systems need structured logging, distributed tracing, and metrics at every integration point.

AI-generated code typically has console.log statements or no logging at all. Hardening means adding:

- Request IDs that trace across services

- Structured logs with consistent schemas

- Metrics for latency, error rates, and saturation

- Dashboards that show system health at a glance
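The first two items can be sketched together as a small request-scoped logger. This is a minimal example under stated assumptions: `createRequestLogger` and the `LogEntry` schema are hypothetical, and a production service would more likely use an established logging library with the same idea.

```typescript
type LogLevel = "info" | "warn" | "error";

// One schema for every log line, so logs can be filtered by requestId.
interface LogEntry {
  timestamp: string;
  level: LogLevel;
  requestId: string;
  message: string;
  fields: Record<string, unknown>;
}

type Sink = (line: string) => void;

// Returns a logger bound to a single request. The sink is injectable so the
// same code can write to stdout, a file, or a test buffer.
function createRequestLogger(requestId: string, sink: Sink = console.log) {
  const write = (
    level: LogLevel,
    message: string,
    fields: Record<string, unknown> = {},
  ): void => {
    const entry: LogEntry = {
      timestamp: new Date().toISOString(),
      level,
      requestId,
      message,
      fields,
    };
    sink(JSON.stringify(entry)); // one JSON object per line
  };

  return {
    info: (message: string, fields?: Record<string, unknown>) => write("info", message, fields),
    warn: (message: string, fields?: Record<string, unknown>) => write("warn", message, fields),
    error: (message: string, fields?: Record<string, unknown>) => write("error", message, fields),
  };
}
```

Passing the same `requestId` to downstream services (for example in a request header) is what lets a single failed payment be followed from the entry point to the database.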

Hardening Layer 2: Resilience

Production systems fail. The question is whether they fail gracefully or catastrophically.

Hardening adds:

- Retry logic with exponential backoff

- Circuit breakers to prevent cascading failures

- Timeouts at every boundary

- Graceful degradation when dependencies are unavailable

The AI generated the happy path. Hardening adds the unhappy paths that keep you in business.
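To make the first item concrete, here is a minimal sketch of retry with exponential backoff. The names `retryWithBackoff`, `backoffDelayMs`, and `RetryOptions` are hypothetical, and the example omits jitter and per-attempt timeouts for brevity.

```typescript
interface RetryOptions {
  maxAttempts: number; // total tries, including the first
  baseDelayMs: number; // delay before the first retry
  maxDelayMs: number; // cap so delays do not grow without bound
  sleep?: (ms: number) => Promise<void>; // injectable for tests
}

// Delay before retry number `retryIndex` (0-based): base * 2^retryIndex, capped.
function backoffDelayMs(retryIndex: number, baseDelayMs: number, maxDelayMs: number): number {
  return Math.min(maxDelayMs, baseDelayMs * 2 ** retryIndex);
}

const defaultSleep = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

// Runs `operation`, retrying on any thrown error until it succeeds or
// `maxAttempts` is reached, then rethrows the last error.
async function retryWithBackoff<T>(
  operation: () => Promise<T>,
  options: RetryOptions,
): Promise<T> {
  if (options.maxAttempts < 1) {
    throw new RangeError("maxAttempts must be at least 1");
  }
  const sleep = options.sleep ?? defaultSleep;
  let lastError: unknown;

  for (let attempt = 0; attempt < options.maxAttempts; attempt++) {
    try {
      return await operation();
    } catch (err) {
      lastError = err;
      const isLastAttempt = attempt === options.maxAttempts - 1;
      if (isLastAttempt) break;
      await sleep(backoffDelayMs(attempt, options.baseDelayMs, options.maxDelayMs));
    }
  }
  throw lastError;
}
```

Two design notes: retries are only safe when the wrapped operation is idempotent, and production implementations commonly add random jitter to the delay so that many clients do not retry in lockstep.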

Hardening Layer 3: Security

AI models often follow security best practices when prompted, but they cannot anticipate your specific threat model. Hardening means:

- Input validation at every boundary

- Principle of least privilege for service accounts

- Secrets stored in a dedicated secrets manager, not plain environment variables

- Dependency scanning and vulnerability monitoring

The prototype didn’t need to be secure. Production does.
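Input validation at the boundary can be as simple as refusing to let untyped data past the entry point. The sketch below assumes a hypothetical `PaymentRequest` shape and validation rules; the point is the pattern of converting `unknown` input into a typed value or a list of errors, not the specific fields.

```typescript
// Hypothetical request shape for a payment endpoint.
interface PaymentRequest {
  amountCents: number;
  currency: string;
  customerId: string;
}

type ParseResult =
  | { ok: true; value: PaymentRequest }
  | { ok: false; errors: string[] };

// Converts untrusted input (for example a parsed JSON body) into a typed
// value, or returns every validation error found.
function parsePaymentRequest(input: unknown): ParseResult {
  if (typeof input !== "object" || input === null) {
    return { ok: false, errors: ["body must be a JSON object"] };
  }
  const body = input as Record<string, unknown>;
  const errors: string[] = [];

  const amountCents = body["amountCents"];
  if (typeof amountCents !== "number" || !Number.isInteger(amountCents) || amountCents <= 0) {
    errors.push("amountCents must be a positive integer");
  }

  const currency = body["currency"];
  if (typeof currency !== "string" || !/^[A-Z]{3}$/.test(currency)) {
    errors.push("currency must be a three-letter uppercase code");
  }

  const customerId = body["customerId"];
  if (typeof customerId !== "string" || customerId.trim() === "") {
    errors.push("customerId must be a non-empty string");
  }

  if (errors.length > 0) return { ok: false, errors };
  // The checks above guarantee the field types, so the cast is safe here.
  return { ok: true, value: { amountCents, currency, customerId } as PaymentRequest };
}
```

Code behind this function can then accept `PaymentRequest` and never re-check the raw body, which keeps validation in one place instead of scattered across components.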

Hardening Layer 4: Testing

Unit tests verify code does what you wrote. Integration tests verify code does what you meant. AI-heavy codebases often have the former and lack the latter.

Hardening adds:

- Contract tests for API boundaries

- Integration tests for critical paths

- Load tests to find breaking points

- Chaos tests to verify resilience

You find bugs in testing, or your users find them in production. Choose wisely.

The Cost of Waiting

Every month you postpone hardening, the debt compounds.

- Month 3: Code reviews take 2x longer. Velocity drops.

- Month 6: Intermittent bugs consume 40% of engineering time.

- Month 9: Senior engineer leaves. Knowledge loss creates panic.

- Month 12: Major outage. Postmortem reveals systemic issues.

The hardening work you deferred is now an emergency rewrite. You’re not shipping features anymore. You’re digging out of technical debt.

The irony? AI accelerated the prototyping phase but extended the time to production stability. You didn’t save time—you moved the work to a more expensive phase.

Common Pitfalls

| Pitfall | Why It Happens | Fix |
|---|---|---|
| Treating AI output as final | AI generates plausible code that passes type checking | Review AI code like a junior engineer’s work: assume it needs hardening |
| Adding features before hardening | Prototype works, stakeholders want more, urgency to ship | Set a hardening gate before adding new functionality to the system |
| No architectural oversight | Each prompt solved in isolation, no system-level coherence | Establish architectural patterns and enforce them across all AI-generated code |
| Skipping documentation | Code was generated quickly, documentation feels like overhead | Treat docs as part of the hardening process: document architecture, not just functions |
| Assuming tests prove production readiness | Unit tests pass for happy paths, edge cases untested | Add integration, load, and chaos tests before calling anything production-ready |

The Path Forward

You don’t need to stop using AI. You need to use it differently.

Treat AI-generated code as draft material, not production code. The AI is the fastest junior engineer you’ve ever had—brilliant at implementation, zero experience with production realities. That’s a valuable tool if you manage it correctly.

Establish a hardening gate between prototype and production. Every feature crosses that gate with observability, resilience, security, and testing in place. The gate is non-negotiable.

Your prototype shipped. Now decide: pay the hardening cost on your timeline, or wait until the system decides for you.

Your AI-assisted codebase works today. Will it work when you scale?

We’ve helped companies transition from AI prototypes to production-hardened systems. Our production hardening service adds observability, resilience, and security to your existing codebase without stopping feature development.
